Computer Organization and Architecture explores the structural elements of computing systems, from basic components to advanced parallel processing concepts. Study material on the subject is often distributed in PDF format.

Historical Context and Evolution

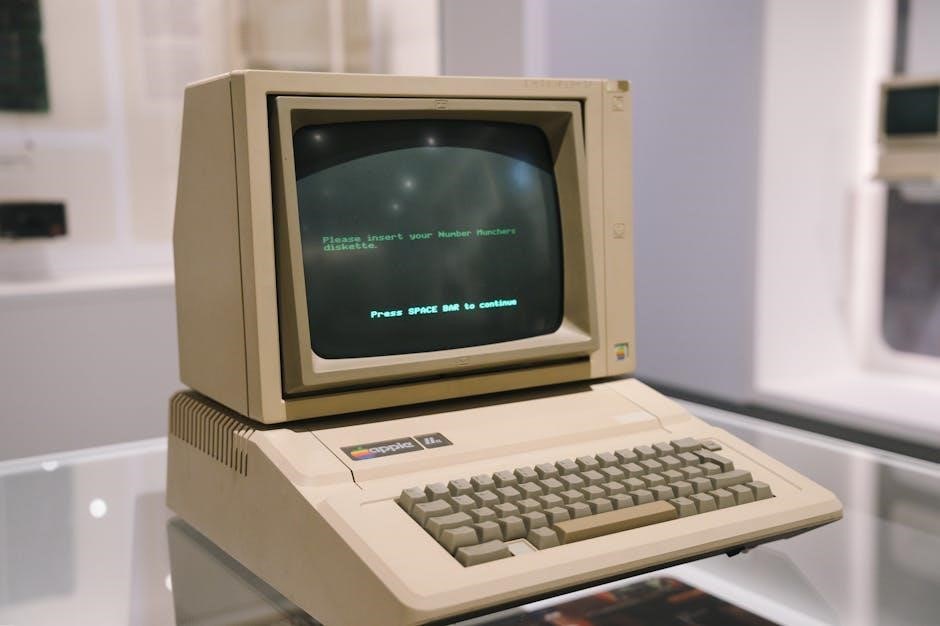

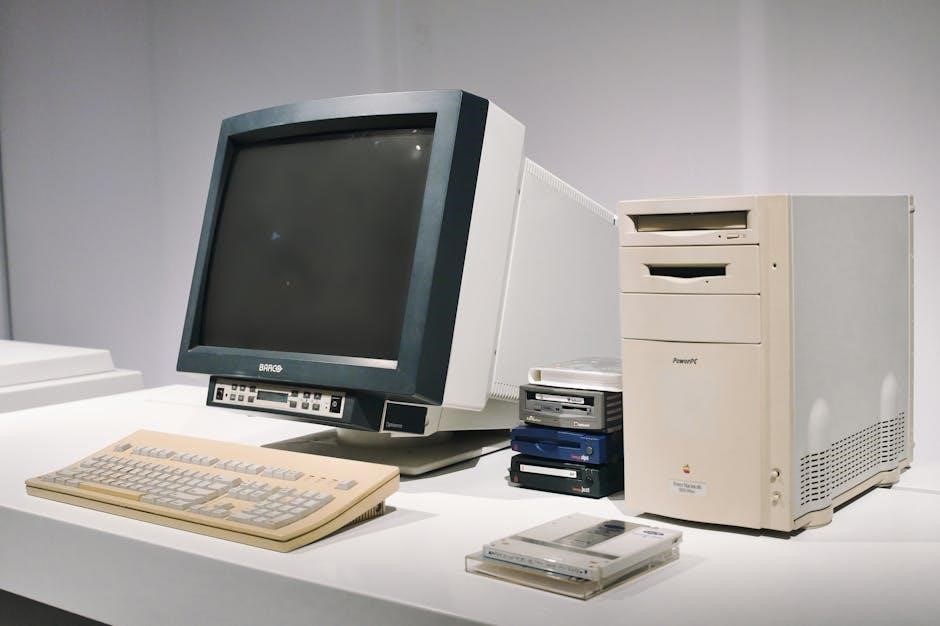

Early computing relied on mechanical devices, evolving through electromechanical relays and vacuum tubes. The advent of the transistor in the late 1940s, and subsequently the integrated circuit, revolutionized computer design, enabling miniaturization and increased complexity.

Early designs such as the von Neumann architecture established principles still prevalent today, most notably the stored-program concept: instructions and data share a single memory and address space.

PDF resources detailing this evolution showcase the shift from bulky, power-hungry machines to the sophisticated, energy-efficient systems we use now. The progression includes the development of RISC and CISC architectures, which shaped instruction set design and CPU functionality. Studying these historical trends, often documented in comprehensive PDFs, provides crucial context for understanding modern computer organization.

Importance of Studying Computer Organization

Understanding computer organization is vital for anyone involved in software development, system administration, or hardware engineering. It provides insights into how software interacts with hardware, enabling optimization and efficient resource utilization.

Analyzing architecture allows for informed decisions regarding system design, performance tuning, and troubleshooting.

PDF resources on this topic equip students and professionals with the knowledge to evaluate different architectures, like RISC versus CISC, and comprehend the impact of pipelining and parallel processing. This knowledge is crucial for building robust, scalable, and high-performing computing systems. A solid grasp of these fundamentals, often found in detailed PDFs, is essential for innovation in the field.

Basic Computer Components

Core components – CPU, memory (RAM, ROM, cache), and I/O devices – form the foundation of any computing system, detailed in many computer organization and architecture PDFs.

Central Processing Unit (CPU)

The Central Processing Unit (CPU) is the brain of the computer, responsible for executing instructions. Computer organization and architecture PDFs extensively cover its internal workings. It fetches instructions from memory, decodes them, and performs arithmetic and logical operations.

Understanding the CPU’s role is crucial, as it directly impacts system performance. These resources detail how the CPU interacts with other components, like memory and I/O devices. The CPU’s design, including its control unit and arithmetic logic unit (ALU), is a central focus. PDFs often illustrate the instruction cycle – fetch, decode, execute – and various addressing modes used to access data. Modern CPUs employ techniques like pipelining and parallel processing to enhance speed and efficiency, concepts thoroughly explained in dedicated materials.

Memory Hierarchy (Cache, RAM, ROM)

The Memory Hierarchy is fundamental to computer performance, a key topic in computer organization and architecture PDFs. It consists of multiple levels – Cache, RAM (Random Access Memory), and ROM (Read-Only Memory) – each differing in speed, cost, and capacity.

Cache memory, the fastest and most expensive per byte of the three, stores frequently accessed data close to the CPU. RAM provides volatile storage for active programs and data. ROM holds non-volatile instructions, like the boot program. PDFs detail how these levels work together to provide efficient data access. Understanding this hierarchy is vital, as it determines how quickly the CPU can retrieve information. These resources explain concepts like cache mapping, replacement policies, and the trade-offs between different memory types.
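To make cache mapping concrete, here is a minimal sketch of a direct-mapped cache, one of the simplest mapping schemes. The function name, the cache size (4 lines), and the block size (4 addresses per block) are illustrative assumptions, not values from any particular machine.

```python
def simulate_direct_mapped(addresses, num_lines=4, block_size=4):
    """Count hits and misses for a toy direct-mapped cache (hypothetical sizes)."""
    tags = [None] * num_lines          # one stored tag per cache line
    hits = misses = 0
    for addr in addresses:
        block = addr // block_size     # memory block that contains this address
        index = block % num_lines      # the only line this block may occupy
        tag = block // num_lines       # distinguishes blocks that share a line
        if tags[index] == tag:
            hits += 1
        else:
            misses += 1
            tags[index] = tag          # evict whatever was in the line before
    return hits, misses


# Addresses 0-3 share one block; address 16 maps to the same line and evicts it.
trace = [0, 1, 2, 3, 16, 0, 1]
hits, misses = simulate_direct_mapped(trace)
print(hits, misses)  # 4 3
```

In a direct-mapped cache the replacement policy is trivial because each block has exactly one possible line. Set-associative designs need a real policy such as LRU.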

Input/Output (I/O) Devices

Input/Output (I/O) Devices are crucial for a computer system’s interaction with the external world, a core subject within computer organization and architecture PDFs. These devices facilitate data transfer between the computer and its surroundings.

Input devices, like keyboards, mice, and scanners, feed data into the system. Output devices, such as monitors and printers, display or produce results. PDFs often detail I/O interfaces, including ports and buses, and how the CPU communicates with these devices. Understanding I/O is essential for comprehending system performance and data flow. Resources explain concepts like interrupt handling and Direct Memory Access (DMA), optimizing data transfer efficiency and overall system responsiveness.

CPU Structure and Function

CPU Structure and Function, detailed in computer organization and architecture PDFs, covers the instruction cycle, control units, and addressing modes for efficient processing.

Instruction Cycle

The instruction cycle, a fundamental concept within computer organization and architecture – often detailed in comprehensive PDF resources – is the process a CPU follows to execute a single instruction. This cycle typically consists of several key phases: Fetch, Decode, Execute, and Store.

During Fetch, the instruction is retrieved from memory. Decode interprets the instruction’s opcode and operands. The Execute phase performs the operation specified by the instruction, utilizing the CPU’s arithmetic logic unit (ALU) or other components. Finally, in the Store phase, the results are written back to memory or registers.
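The four phases can be sketched with a toy accumulator machine. The opcodes (`LOAD`, `ADD`, `STORE`, `HALT`) and the tuple-based instruction encoding are hypothetical, chosen only to keep the example short.

```python
def run(program, memory):
    """Run a toy accumulator program; each instruction is (opcode, operand)."""
    pc = 0    # program counter
    acc = 0   # accumulator register
    while True:
        instruction = program[pc]       # Fetch: read the instruction at PC
        pc += 1
        opcode, operand = instruction   # Decode: split opcode and operand
        if opcode == "LOAD":            # Execute: perform the operation
            acc = memory[operand]
        elif opcode == "ADD":
            acc = acc + memory[operand]
        elif opcode == "STORE":
            memory[operand] = acc       # Store: write the result back to memory
        elif opcode == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {opcode}")


program = [("LOAD", 0), ("ADD", 1), ("STORE", 2), ("HALT", None)]
result = run(program, [2, 3, 0])
print(result)  # [2, 3, 5]
```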

Understanding this cycle, as presented in various computer organization and architecture PDFs, is crucial for grasping how software interacts with hardware and how performance can be optimized through techniques like pipelining.

Addressing Modes

Addressing modes, a core topic in computer organization and architecture – frequently covered in detailed PDF documentation – define how the operand of an instruction is located. These modes dictate how the CPU interprets the address field of an instruction. Common modes include Immediate, where the operand is contained directly within the instruction; Direct, where the instruction holds the memory address of the operand; and Indirect, where the instruction references a memory location that contains the operand’s address.

Other modes like Register (operand in a register), Register Indirect, and Indexed (address calculated from a base address and an index) offer flexibility. Understanding these modes, as explained in computer organization and architecture PDFs, is vital for efficient memory access and program optimization, impacting performance and code portability.
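The modes described above can be compared side by side in a short sketch. The function name, the mode labels, and the use of a register called `X` as the index register are assumptions made for illustration.

```python
def fetch_operand(mode, field, memory, registers):
    """Resolve an operand from an instruction's address field (toy model)."""
    if mode == "immediate":
        return field                              # operand is the field itself
    if mode == "direct":
        return memory[field]                      # field is the operand's address
    if mode == "indirect":
        return memory[memory[field]]              # field points at the address
    if mode == "register":
        return registers[field]                   # operand sits in a register
    if mode == "register_indirect":
        return memory[registers[field]]           # register holds the address
    if mode == "indexed":
        return memory[field + registers["X"]]     # base address plus index
    raise ValueError(f"unknown addressing mode: {mode}")


memory = [10, 3, 20, 30, 40]
registers = {"R1": 2, "X": 1}

print(fetch_operand("immediate", 1, memory, registers))             # 1
print(fetch_operand("direct", 1, memory, registers))                # 3
print(fetch_operand("indirect", 1, memory, registers))              # 30
print(fetch_operand("register", "R1", memory, registers))           # 2
print(fetch_operand("register_indirect", "R1", memory, registers))  # 20
print(fetch_operand("indexed", 3, memory, registers))               # 40
```

Note how the same field value `1` yields three different operands depending on the mode. Each extra level of indirection costs an additional memory access.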

Hardwired Control Unit

A Hardwired Control Unit, detailed in many computer organization and architecture PDFs, implements control signals using gates and flip-flops. This approach offers speed due to its direct implementation – signals are generated directly by the hardware. However, it lacks flexibility; modifying the instruction set requires redesigning the control logic itself.

These units are typically used in simpler, fixed-function processors where instruction sets are unlikely to change. Computer organization and architecture resources emphasize that the design process involves carefully crafting the logic circuits to generate the correct sequence of control signals for each instruction. This contrasts sharply with microprogrammed control: the choice between the two is a trade-off between speed and adaptability.

Micro-programmed Control Unit

A Micro-programmed Control Unit, extensively covered in computer organization and architecture PDFs, utilizes sequences of microinstructions stored in a control memory. Each machine instruction triggers a stored sequence of microinstructions, each of which specifies one or more micro-operations, offering greater flexibility than hardwired control. Modifying the instruction set is achieved by altering the microprogram, without changing the control logic.

While slower than hardwired control due to the memory access overhead, microprogramming simplifies complex instruction sets and facilitates easier modifications. Computer organization and architecture materials highlight that the control memory holds the microprogram, dictating the sequence of operations. This approach is favored in systems requiring adaptability and complex control sequences.
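The idea of a control memory can be sketched as a lookup table. The register-transfer strings and the names `FETCH`, `CONTROL_MEMORY`, and `micro_operations` below are hypothetical. A real control store holds binary control words, not text.

```python
# Micro-operations shared by every instruction's fetch phase (illustrative).
FETCH = ["MAR <- PC", "MDR <- M[MAR]", "IR <- MDR", "PC <- PC + 1"]

# Hypothetical control memory: each opcode maps to its microprogram.
CONTROL_MEMORY = {
    "LOAD": ["MAR <- IR.addr", "MDR <- M[MAR]", "ACC <- MDR"],
    "ADD": ["MAR <- IR.addr", "MDR <- M[MAR]", "ACC <- ACC + MDR"],
    "STORE": ["MAR <- IR.addr", "MDR <- ACC", "M[MAR] <- MDR"],
}


def micro_operations(opcode):
    """Return the fetch sequence followed by the opcode's microprogram."""
    return FETCH + CONTROL_MEMORY[opcode]


# Extending the instruction set is a table edit, not a logic redesign.
CONTROL_MEMORY["SUB"] = ["MAR <- IR.addr", "MDR <- M[MAR]", "ACC <- ACC - MDR"]

for step in micro_operations("SUB"):
    print(step)
```

The lookup on every instruction is also where the speed penalty relative to hardwired control comes from: each step requires a read from the control memory.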

Instruction Set Architecture (ISA)

Instruction Set Architecture (ISA), detailed in computer organization and architecture PDFs, defines the instructions a processor can understand and execute. RISC and CISC are the two classic design philosophies for an ISA.

RISC vs. CISC Architecture

Reduced Instruction Set Computing (RISC) and Complex Instruction Set Computing (CISC) represent contrasting approaches to processor design, extensively covered in computer organization and architecture PDFs. CISC architectures, historically dominant, employ a large and varied instruction set, aiming to accomplish tasks with fewer instructions – though often at the cost of increased complexity.

RISC, conversely, prioritizes a smaller, streamlined instruction set, emphasizing simplicity and efficiency. Simple, uniform instructions are easier to decode and to pipeline, which can lead to faster execution. Modern processors often blend aspects of both, utilizing CISC instruction sets but internally decomposing them into RISC-like micro-operations. Understanding these trade-offs is crucial when studying processor design, as detailed in comprehensive learning materials.

Instruction Formats

Instruction formats define how instructions are represented in binary code, a core concept within computer organization and architecture, often detailed in PDF resources. These formats typically include fields for the opcode (specifying the operation), operands (data or addresses), and addressing modes. Common formats include fixed-length, where all instructions have the same size, and variable-length, offering flexibility but requiring more complex decoding.

The arrangement of these fields impacts instruction size, execution efficiency, and the complexity of the control unit. Understanding instruction formats is essential for comprehending how the CPU fetches, decodes, and executes programs. PDFs dedicated to this subject provide detailed diagrams and examples illustrating various format structures.
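As an illustration of a fixed-length format, the sketch below packs an instruction into a 16-bit word. The field widths (4-bit opcode, 2-bit addressing mode, 10-bit address) are an assumed layout for this example, not one taken from a real ISA.

```python
OPCODE_BITS, MODE_BITS, ADDR_BITS = 4, 2, 10   # assumed 16-bit layout


def encode(opcode, mode, address):
    """Pack the three fields into one 16-bit instruction word."""
    if not 0 <= opcode < (1 << OPCODE_BITS):
        raise ValueError("opcode does not fit in its field")
    if not 0 <= mode < (1 << MODE_BITS):
        raise ValueError("mode does not fit in its field")
    if not 0 <= address < (1 << ADDR_BITS):
        raise ValueError("address does not fit in its field")
    return (opcode << (MODE_BITS + ADDR_BITS)) | (mode << ADDR_BITS) | address


def decode(word):
    """Split a 16-bit instruction word back into (opcode, mode, address)."""
    address = word & ((1 << ADDR_BITS) - 1)
    mode = (word >> ADDR_BITS) & ((1 << MODE_BITS) - 1)
    opcode = word >> (MODE_BITS + ADDR_BITS)
    return opcode, mode, address


word = encode(opcode=3, mode=1, address=5)
print(f"{word:016b}")   # 0011010000000101
print(decode(word))     # (3, 1, 5)
```

Because every field sits at a fixed bit position, decoding is a handful of shifts and masks. A variable-length format would first have to inspect the opcode to learn how many bytes follow.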

Pipelining

Pipelining, a crucial technique in computer organization and architecture, enhances performance by overlapping instruction execution stages, as detailed in many PDF guides.

Basic Concepts of Pipelining

Pipelining dramatically improves CPU throughput by dividing instruction execution into distinct stages – fetch, decode, execute, memory access, and write-back – allowing multiple instructions to be processed concurrently. Imagine an assembly line; each stage works on a different instruction simultaneously.

This contrasts with non-pipelined execution, where one instruction must fully complete before the next begins. Pipelining does not shorten the latency of an individual instruction, but the overall instruction completion rate increases significantly because hardware resources are kept busy in every cycle.
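The throughput gain can be estimated with a simple cycle count. The sketch below assumes an ideal pipeline: every stage takes one clock cycle and no hazards cause stalls. The function names are illustrative.

```python
def cycles_unpipelined(n_instructions, n_stages):
    """Each instruction passes through all stages before the next one starts."""
    return n_instructions * n_stages


def cycles_pipelined(n_instructions, n_stages):
    """The first instruction fills the pipeline; then one completes per cycle."""
    if n_instructions < 1:
        raise ValueError("need at least one instruction")
    return n_stages + (n_instructions - 1)


def speedup(n_instructions, n_stages):
    """Ideal speedup of pipelined over non-pipelined execution."""
    return cycles_unpipelined(n_instructions, n_stages) / cycles_pipelined(
        n_instructions, n_stages
    )


print(cycles_unpipelined(100, 5))   # 500
print(cycles_pipelined(100, 5))     # 104
print(round(speedup(100, 5), 2))    # 4.81
```

As the instruction count grows, the ideal speedup approaches the number of stages. Real pipelines fall short of this bound because of the hazards discussed in the next section.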

Numerous computer organization and architecture resources, often available as PDF documents, illustrate this concept with diagrams and examples. Understanding these stages and their interactions is fundamental to grasping modern processor design and optimization techniques. The goal is to maximize pipeline efficiency.

Pipelining Hazards (Data, Control, Structural)

Pipelining efficiency can be hampered by hazards – situations preventing the next instruction in the pipeline from executing during its designated clock cycle. Data hazards occur when an instruction depends on the result of a previous, not-yet-completed instruction. Control hazards arise from branch instructions, where the next instruction’s address isn’t known until the branch is resolved.

Structural hazards happen when multiple instructions require the same hardware resource simultaneously. These hazards necessitate techniques like stalling, forwarding, or branch prediction to maintain pipeline flow. Many computer organization and architecture texts, frequently found as PDF resources, detail these mitigation strategies.
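A read-after-write data hazard, the most common kind, can be detected by comparing register names. In this sketch each instruction is a hypothetical `(destination, sources)` pair, and `window` is an assumed parameter for how many following instructions could still read a stale value. The real distance depends on the pipeline design and on whether forwarding is present.

```python
def raw_hazards(instructions, window=2):
    """Find read-after-write dependences between nearby instructions.

    Returns (producer_index, consumer_index, register) tuples.
    """
    hazards = []
    for i, (dest, _sources) in enumerate(instructions):
        if dest is None:               # e.g. a store writes no register
            continue
        for j in range(i + 1, min(i + 1 + window, len(instructions))):
            if dest in instructions[j][1]:
                hazards.append((i, j, dest))
    return hazards


program = [
    ("r1", ("r2", "r3")),   # r1 = r2 + r3
    ("r4", ("r1", "r5")),   # r4 = r1 + r5  (reads r1 right after it is written)
    ("r6", ("r7", "r8")),   # r6 = r7 + r8  (independent)
]
print(raw_hazards(program))  # [(0, 1, 'r1')]
```

A pipeline would respond to each reported pair by forwarding the result from a later stage or, failing that, by stalling the consumer.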

Effectively managing these hazards is crucial for realizing the performance benefits of pipelining. Understanding their causes and solutions is a core component of processor design.

Parallel Processing

Parallel processing, covered extensively in computer organization and architecture resources – often available as a PDF – utilizes multiple processors for enhanced computational speed.

Parallel processors represent a significant advancement in computer organization and architecture, moving beyond the limitations of single-processor systems. These systems employ multiple processing units to concurrently execute instructions or parts of a program, dramatically increasing throughput and reducing execution time. Resources like comprehensive computer organization and architecture PDF materials detail various approaches to parallel processing.

The core idea is to divide a complex task into smaller, independent subtasks that can be processed simultaneously. This contrasts with sequential processing, where tasks are executed one after another. Understanding parallel processors is crucial for tackling computationally intensive problems in fields like scientific computing, data analysis, and artificial intelligence. These concepts are thoroughly explained within detailed academic texts and readily available PDF guides.
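The divide-and-combine pattern can be sketched with Python's standard `concurrent.futures` module. The function name `parallel_sum` and the default of four workers are assumptions. Threads are used here only to show the structure: in CPython, CPU-bound work generally needs processes rather than threads to run truly in parallel.

```python
from concurrent.futures import ThreadPoolExecutor


def parallel_sum(values, workers=4):
    """Split a list into chunks, sum each chunk concurrently, then combine."""
    chunk = max(1, (len(values) + workers - 1) // workers)   # ceiling division
    chunks = [values[i:i + chunk] for i in range(0, len(values), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(sum, chunks))   # independent subtasks
    return sum(partials)                         # sequential combine step


print(parallel_sum(list(range(1, 101))))  # 5050
```

The subtasks are independent because no chunk needs another chunk's result. Only the final combine step is sequential.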

Shared Memory Multiprocessors

Shared memory multiprocessors represent a key architecture in parallel processing, detailed extensively in resources like a computer organization and architecture PDF. These systems consist of multiple processors that share a single, common address space for both instructions and data. This shared memory allows processors to communicate and synchronize efficiently, facilitating collaborative problem-solving.

Access to the shared memory is typically managed through hardware mechanisms like caches with coherence protocols and memory controllers, which keep data consistent and reduce contention. Programming these systems often involves careful consideration of synchronization primitives, such as locks and semaphores, to prevent race conditions. Studying these concepts, often found in comprehensive PDF guides, is vital for developing high-performance parallel applications.
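A lock protecting a shared variable can be sketched with Python's standard `threading` module. The function name and the thread and iteration counts are illustrative assumptions.

```python
import threading


def count_with_lock(num_threads=4, increments=10_000):
    """Increment one shared counter from several threads, guarded by a lock."""
    counter = 0
    lock = threading.Lock()

    def worker():
        nonlocal counter
        for _ in range(increments):
            with lock:           # only one thread may update at a time
                counter += 1     # read-modify-write on shared state

    threads = [threading.Thread(target=worker) for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter


print(count_with_lock())  # 40000
```

Without the lock, two threads could read the same old value and both write back the same new value, losing an increment. That lost update is the race condition the lock prevents.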

Computer Arithmetic

Computer arithmetic, detailed in a computer organization and architecture PDF, focuses on numerical operations within computers, including integer and floating-point representations.

Integer Representation

Integer representation, a core topic within computer organization and architecture – often detailed in comprehensive PDF resources – explores how computers store and manipulate whole numbers. This involves understanding various methods like sign-magnitude, one’s complement, and two’s complement. Two’s complement is the most prevalent due to its simplified arithmetic operations and single representation of zero.

These methods dictate how positive and negative integers are encoded in binary format. The choice of representation impacts the range of representable numbers and the complexity of arithmetic circuits. A computer organization and architecture PDF will typically delve into the advantages and disadvantages of each method, alongside practical examples demonstrating addition, subtraction, and overflow conditions. Understanding these concepts is crucial for optimizing performance and ensuring accurate calculations within computer systems.
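Two's complement encoding and overflow detection can be sketched as follows. The function names and the default 8-bit width are assumptions made for this example.

```python
def to_twos_complement(value, bits=8):
    """Encode a signed integer as an unsigned bit pattern of the given width."""
    lo, hi = -(1 << (bits - 1)), (1 << (bits - 1)) - 1
    if not lo <= value <= hi:
        raise OverflowError(f"{value} does not fit in {bits} bits")
    return value & ((1 << bits) - 1)


def from_twos_complement(pattern, bits=8):
    """Decode a bit pattern back into a signed integer."""
    if pattern & (1 << (bits - 1)):       # sign bit set means negative
        return pattern - (1 << bits)
    return pattern


def add_with_overflow(a, b, bits=8):
    """Add two signed values as fixed-width hardware would; flag overflow."""
    mask = (1 << bits) - 1
    raw = (to_twos_complement(a, bits) + to_twos_complement(b, bits)) & mask
    result = from_twos_complement(raw, bits)
    # Overflow: operands share a sign but the result's sign differs.
    overflow = (a >= 0) == (b >= 0) and (result >= 0) != (a >= 0)
    return result, overflow


print(f"{to_twos_complement(-5):08b}")   # 11111011
print(add_with_overflow(-5, 3))          # (-2, False)
print(add_with_overflow(100, 50))        # (-106, True)
```

The same addition circuit handles positive and negative operands, which is the simplification that makes two's complement the prevalent choice. The last line shows the wraparound that occurs when 150 exceeds the 8-bit maximum of 127.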

Floating-Point Representation

Floating-point representation, a critical component detailed in computer organization and architecture materials – frequently available as a PDF – addresses the storage of numbers with fractional parts. Unlike integers, floating-point utilizes a scientific notation-like format, consisting of a sign, exponent, and mantissa (or significand). The IEEE 754 standard is the most widely adopted, defining formats like single-precision (32-bit) and double-precision (64-bit).

A computer organization and architecture PDF will explain how these components work together to represent a wide range of values, both very large and very small. It will also cover topics like normalization, rounding errors, and special values like NaN (Not a Number) and infinity. Understanding floating-point is essential for applications that depend on numerical accuracy, such as scientific simulations and graphics rendering.
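The three fields of an IEEE 754 single-precision value can be inspected with Python's standard `struct` module. The function name `decompose_float32` is an assumption. The field widths (1 sign bit, 8 exponent bits, 23 fraction bits) come from the standard's 32-bit format.

```python
import struct


def decompose_float32(x):
    """Return (sign, biased_exponent, fraction) of x as a 32-bit IEEE 754 float."""
    (bits,) = struct.unpack(">I", struct.pack(">f", x))  # reinterpret the bytes
    sign = bits >> 31                  # 1 bit
    exponent = (bits >> 23) & 0xFF     # 8 bits, stored with a bias of 127
    fraction = bits & 0x7FFFFF         # 23 bits, implicit leading 1 when normalized
    return sign, exponent, fraction


print(decompose_float32(1.0))            # (0, 127, 0)
print(decompose_float32(-0.15625))       # (1, 124, 2097152)
print(decompose_float32(float("inf")))   # (0, 255, 0)

# Rounding error: 0.1 and 0.2 have no exact binary representation.
print(0.1 + 0.2 == 0.3)                  # False
```

For -0.15625, which equals -1.25 × 2^-3, the stored exponent is -3 + 127 = 124 and the fraction encodes the 0.25 after the implicit leading 1. An exponent field of all ones marks the special values: infinity when the fraction is zero, NaN otherwise.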
